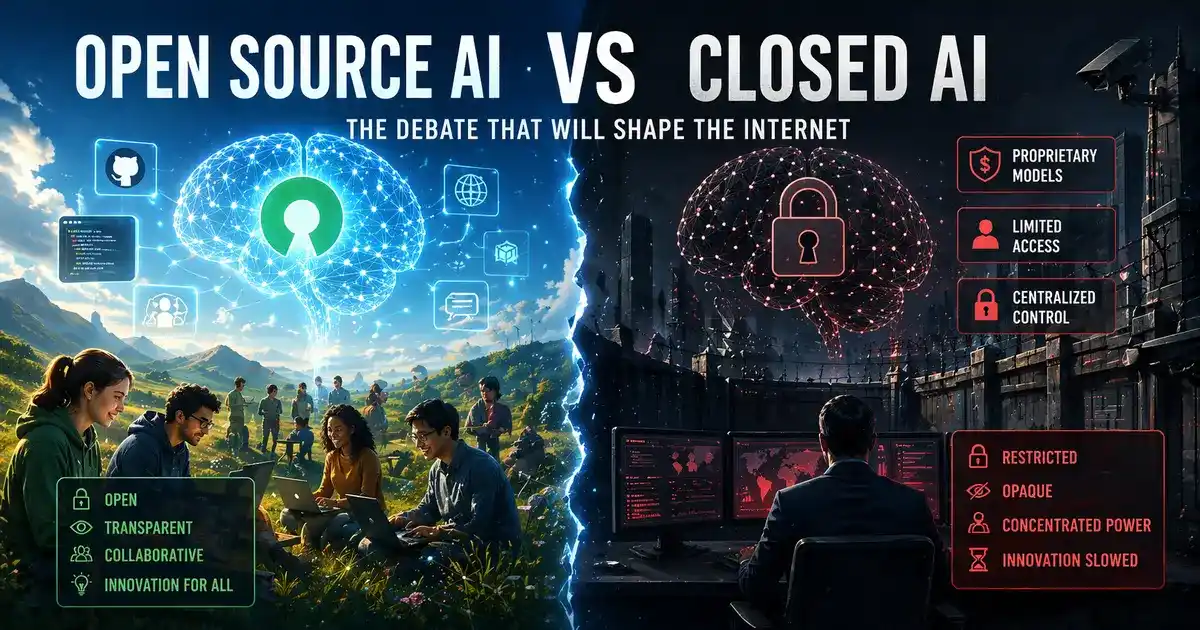

Open Source vs Closed AI: The Debate That Will Shape the Internet

There’s a war brewing in the world of artificial intelligence — not between humans and machines, but between two fundamentally different philosophies of how AI should be built, distributed, and controlled. The open source vs closed AI debate has been simmering for years, but in 2026, it has reached a full boil. The stakes couldn’t be higher: whoever wins this argument will have a massive say in how the internet evolves, who benefits from AI, and who holds the keys to one of the most powerful technologies ever created.

If you’ve been following AI even casually, you’ve noticed the tension. On one side, you have companies like Anthropic, OpenAI, and Google DeepMind building tightly controlled, proprietary models. On the other, you have a rapidly growing ecosystem of openly available models — Meta’s Llama family, Alibaba’s Qwen series, DeepSeek, Mistral, and dozens more — where anyone can download, modify, and deploy the model themselves.

This isn’t just a technical choice. It’s a political, economic, and ethical one — and the decision made at scale will shape the internet for decades.

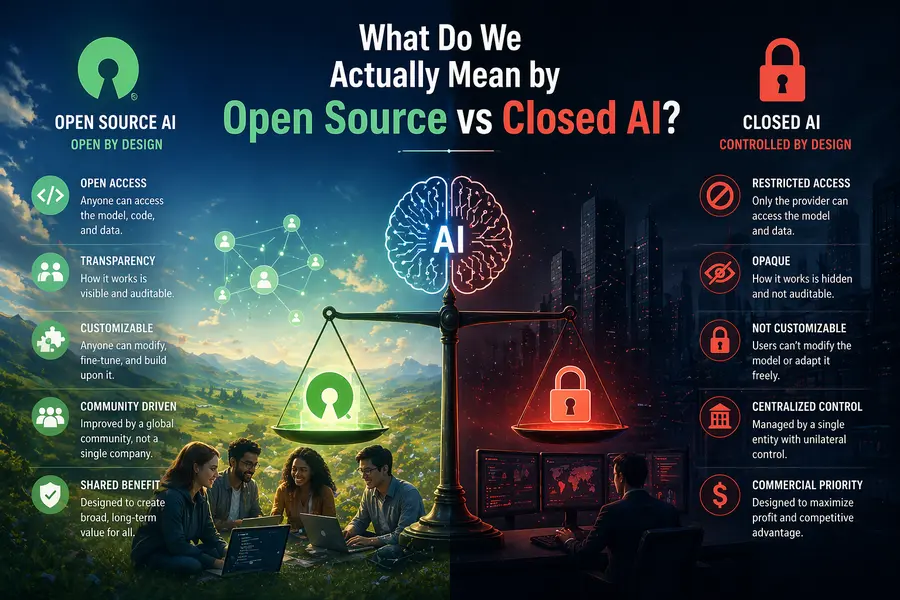

What Do We Actually Mean by Open Source vs Closed AI?

Before getting into the debate itself, it’s worth being precise about what these terms mean, because “open source” in AI is genuinely murkier than it sounds.

Closed AI models are proprietary. The weights, training data, and architecture are kept private. You access them through an API — pay per token, get results, but you never truly own the model or understand its internals. GPT-5, Claude Sonnet 4.6, and Gemini 3.1 Pro are classic examples.

Open-weight models — often loosely called “open source” — make the trained model weights publicly available. You can download and run them yourself, fine-tune them on your own data, and modify their behavior. Models like Llama 4, DeepSeek V3.2, and Qwen 3.5 fall into this category.

There’s a subtle but important distinction: true open source would also mean releasing training data and full training code. Very few models do this completely. But for most developers and businesses, the weight release is what matters — it’s what enables self-hosting, customization, and independence.

Where Things Stand in April 2026

The landscape has shifted dramatically. Just a year ago, the conventional wisdom was that frontier AI required closed models with nine-figure training budgets and tightly guarded architectures. That assumption is crumbling fast.

Open weights have won the volume war: Chinese open-weight providers now account for over 45% of OpenRouter traffic. Xiaomi’s MiMo V2 Pro alone moves 4.79 trillion tokens per week, making it the top model by a 3x margin over anything else on the leaderboard.

April 2026 is also the month where frontier model capability hit a benchmark ceiling — the Artificial Analysis Intelligence Index has held at a composite score of 57 since Gemini 3.1 Pro Preview dropped in February, meaning no public lab has meaningfully broken through for months.

Meanwhile, open-weight models have closed the gap so aggressively that the performance difference between a free self-hosted model and a $5/million-token proprietary API has shrunk to single-digit percentage points in most real-world tasks.

Seven open-weight models currently sit in the frontier tier as of April 2026, including MiMo V2 Pro from Xiaomi, MiniMax M2.7, DeepSeek V3.2, and Kimi K2.5 — all offering competitive performance at dramatically lower cost.

But closed AI hasn’t surrendered. Reasoning still favors closed source: Claude Opus 4.6, GPT-5.4 Pro, and Gemini 3.1 Pro Deep Think retain a meaningful lead on reasoning-heavy benchmarks like GPQA Diamond and Humanity’s Last Exam, typically by 3–8 percentage points.

The Case for Open Source AI

1. Cost Efficiency That Changes Who Can Play

The economics are staggering. Closed-source AI models like GPT-5 and Claude Sonnet 4 typically cost several dollars to tens of dollars per million tokens when accessed via API. Open-source models like Llama 4, DeepSeek-R1, and Qwen 3 can be deployed locally, where the marginal cost of inference falls to the price of electricity and hardware amortization.

Managed inference providers also offer open models via API at significantly lower cost — compare Groq’s $0.59/$0.79 per million tokens for Llama 3.3 70B against GPT-5.2’s $1.75/$14.00, a roughly 3–18x cost reduction while maintaining competitive quality for most tasks.
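The arithmetic behind that range is easy to check. The sketch below uses only the per-million-token prices quoted above (input/output); the 3M-input, 1M-output workload is a hypothetical mix chosen for illustration, and the helper names are made up for this example.

```python
# Prices in USD per million tokens as (input, output), taken from the text above.
GROQ_LLAMA_3_3_70B = (0.59, 0.79)
GPT_5_2 = (1.75, 14.00)


def price_ratios(closed, open_):
    """Return (input_ratio, output_ratio) of the closed price over the open price."""
    return closed[0] / open_[0], closed[1] / open_[1]


def workload_cost(prices, input_tokens, output_tokens):
    """Cost in USD for a workload, given per-million-token (input, output) prices."""
    in_price, out_price = prices
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


in_ratio, out_ratio = price_ratios(GPT_5_2, GROQ_LLAMA_3_3_70B)
print(f"input: {in_ratio:.1f}x, output: {out_ratio:.1f}x")

# Hypothetical workload: 3M input tokens and 1M output tokens.
open_cost = workload_cost(GROQ_LLAMA_3_3_70B, 3_000_000, 1_000_000)
closed_cost = workload_cost(GPT_5_2, 3_000_000, 1_000_000)
print(f"open: ${open_cost:.2f}, closed: ${closed_cost:.2f}")
```

The low end of the range comes from input tokens and the high end from output tokens, so the real saving for a given product depends on how output-heavy its traffic is.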

This matters enormously for startups, researchers in developing countries, and small enterprises that simply can’t write six-figure checks to AI giants every month.

2. Data Privacy and Sovereignty

Every time you send a prompt to a closed model’s API, that data travels to someone else’s server. For hospitals navigating HIPAA, financial institutions with customer data restrictions, government agencies, and defense contractors, this is not a theoretical concern. The data sovereignty problem with closed models covers both immediate security exposure and the broader question of organizational independence, and it is especially acute in regulated industries.

Open-weight models let you run everything on your own infrastructure. Nothing leaves your walls.

3. True Customization and Fine-Tuning

Open models give you the ability to fine-tune on your own proprietary data, not just prompt-engineer around a fixed model. You can explore how these models rank on the Hugging Face Open LLM Leaderboard to find the best base model for your domain. Organizations that keep refining a model with proprietary data and domain expertise end up with an AI asset that becomes a durable competitive advantage.

The downstream effects are already visible. Researchers have built specialized models like Med-Qwen2-7B for clinical diagnostics and Fin-R1 for complex financial reasoning — purpose-built tools that outperform general-purpose closed models in their specific domains.

4. No Vendor Lock-In or Forced Migrations

This one stings for developers who’ve lived through it. OpenAI is planning to retire GPT-4o, GPT-4.1, and o4-mini, pushing developers toward GPT-5.x — but with open models, you control the version. You can freeze a model that works and run it indefinitely, with no deprecation notices, no forced migrations, and no breaking changes to your prompt stack.

Companies with hundreds of automated workflows built around a specific closed model have been caught off-guard by sudden API changes. Self-hosted open models remove that risk, because the weights you deployed cannot be changed from under you.
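Freezing a version comes down to pinning an exact artifact and checking that it never changes. A minimal sketch, assuming the weights live in a local file: record a SHA-256 digest once, then verify it before every deployment. The file name and the stand-in bytes are hypothetical; real weight files are just much larger.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte weights never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file on disk matches the pinned digest."""
    return sha256_of(path) == expected_sha256.lower()


# Demonstration with a tiny stand-in file (hypothetical name and contents).
with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / "model.safetensors"
    weights.write_bytes(b"stand-in for real weights")
    pinned = sha256_of(weights)  # record once, e.g. in version control
    ok_before = verify_pinned(weights, pinned)
    weights.write_bytes(b"silently changed weights")
    ok_after = verify_pinned(weights, pinned)

print(ok_before, ok_after)
```

A check like this is only possible when you hold the weights yourself; behind a hosted API there is no artifact to hash.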

5. Community-Driven Innovation

Open-source LLMs thrive on community support. Researchers and developers from around the world can contribute to development, propose improvements, and fix bugs, and this collaborative approach accelerates innovation as the community refines and enhances models together.

The pace of improvement in the open-source ecosystem over the past 18 months has been breathtaking precisely because of this distributed effort.

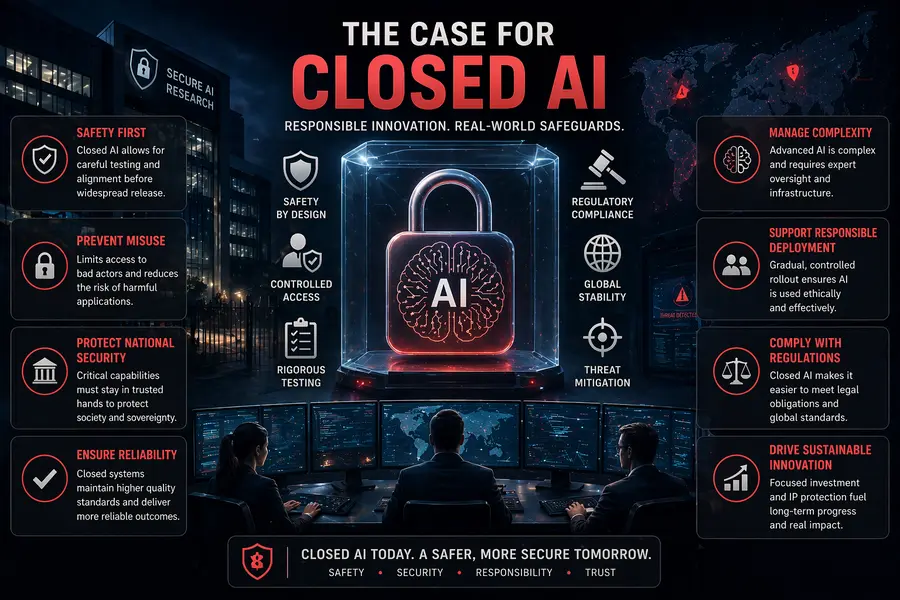

The Case for Closed AI

1. Frontier Reasoning and Multimodal Performance

The honest truth is that for the most demanding tasks, closed models still lead. Multimodal is the category where the closed/open gap remains widest in Q2 2026: GPT-5.4 scores 75% on OSWorld-Verified with native computer use, exceeding the 72.4% human baseline, while Gemini 3.1 Pro offers the strongest video understanding on the market.

For complex multi-step reasoning, frontier math, and long-horizon agentic workflows, closed models remain the safer default in 2026.

2. Simplicity and Speed of Deployment

With closed-source options like GPT-5 and Claude, you can prototype an AI product in minutes with just a simple API call — no GPUs to manage and no infrastructure to maintain.

For teams without dedicated ML engineers, this matters a lot. The operational overhead of running your own model infrastructure — monitoring, scaling, security patching, GPU management — is real and shouldn’t be underestimated.
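To make "a simple API call" concrete, here is a sketch that builds (but does not send) a chat request using only the Python standard library. It assumes the OpenAI-style chat completions convention of a `/chat/completions` path and a JSON body with `model` and `messages`; the model name, API key, and helper function are placeholders, not values from any provider's documentation.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request in the OpenAI-style chat completions shape (assumed convention)."""
    payload = {
        "model": model,  # placeholder model name
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_chat_request(
    base_url="https://api.openai.com/v1",
    api_key="YOUR_API_KEY",          # placeholder, never hard-code real keys
    model="example-closed-model",    # hypothetical name
    prompt="Summarize this support ticket in one sentence.",
)
print(request.get_method(), request.full_url)
# Sending it would be: urllib.request.urlopen(request)
```

That is the whole integration surface for a prototype: no GPUs, drivers, or serving stack. Everything the paragraph above lists as operational overhead stays on the provider's side of that URL.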

3. Safety Guardrails and Alignment Work

Closed AI companies invest heavily in alignment research, red-teaming, and safety evaluation. This is difficult to replicate in community-maintained projects. In April 2026, Anthropic built a model it considered too dangerous to release publicly and restricted access to 50 companies on safety grounds, which illustrates how seriously frontier labs take the question of what should and shouldn’t be freely distributed.

Whether you agree with those restrictions or not, the underlying concern is genuine: powerful models in the wrong hands can cause real harm.

4. Proprietary Advancements and Competitive Edge

With closed-source LLMs, businesses gain access to proprietary software advancements shielded by intellectual property rights — a competitive advantage that is crucial in sectors like finance or healthcare where having bespoke AI tools can significantly differentiate offerings.

Head-to-Head Comparison

Here’s a clear breakdown of how open source and closed AI stack up across the dimensions that matter most to developers and businesses:

| Dimension | Open Source / Open Weight AI | Closed / Proprietary AI |
|---|---|---|
| Cost | Low to near-zero at inference | $1–$70+ per million tokens |
| Data Privacy | Full control, on-premises possible | Data sent to external servers |
| Customization | Fine-tuning, full architecture access | Limited to API parameters & prompts |
| Reasoning Performance | Competitive, 3–8 points behind top closed | Best-in-class for complex reasoning |
| Multimodal | Improving but still behind | Clear leader (GPT-5.4, Gemini 3.1) |
| Deployment Complexity | Requires infrastructure & ML expertise | API plug-and-play, minimal setup |
| Vendor Lock-in | None; you own the model | High; tied to provider’s roadmap |
| Safety & Alignment | Variable, community-dependent | Extensive red-teaming & guardrails |
| Version Control | Freeze any version indefinitely | Subject to deprecation & migration |
| Coding Tasks | Gap has effectively closed | Slight edge at the very top tier |

The Meta Moment: A Canary in the Coal Mine

One of the most telling signals of where this debate is heading came in early April 2026. On April 8, Meta Superintelligence Labs launched Muse Spark — Meta’s first proprietary, closed-weight AI model, abandoning the open-source-only positioning that made Meta’s AI strategy distinct.

This is a massive deal. Meta built its entire AI brand around being the “open” alternative to OpenAI and Anthropic. The fact that competitive pressure drove them to create a closed model suggests that even the most committed open-source advocates are feeling the financial strain of giving everything away.

The coexistence of Llama 4 (open) and Muse Spark (closed) suggests Meta is running a two-track strategy: open-source for ecosystem building and developer trust, proprietary for commercial revenue and competitive moat.

Meta is also developing open-source versions of its next frontier models, codenamed Avocado and Mango. The open-source variants are expected to arrive after the proprietary versions and will likely lack some features present in the closed editions.

This dual-track approach may well become the industry norm: closed models for maximum capability, open derivatives for community building.

The Regulatory Dimension

Governments are paying close attention. The EU AI Act, which entered into force in August 2024 and began applying obligations to general-purpose AI models in August 2025, treats open-weight models with some nuance: they’re exempt from some requirements because of their open nature, but high-capability open models still face scrutiny.

In the US, debates in Washington about whether powerful open-weight models constitute a national security risk have intensified. Some policymakers argue that freely releasing the weights of a 700-billion-parameter model is equivalent to publishing instructions — you lose control of what people do with it. Others counter that restricting model distribution concentrates power dangerously in the hands of a few Silicon Valley companies.

China’s strategy adds another layer. As noted earlier, Chinese open-weight providers, including Alibaba’s Qwen family, MiMo, MiniMax, Zhipu, DeepSeek, and StepFun, now account for over 45% of the tokens processed each week on OpenRouter. These are not small academic projects. They’re industrial-scale operations releasing frontier-quality models for free, which raises genuine questions about strategic intent and long-term geopolitical implications.

What This Means for the Future of the Internet

The open source vs closed AI debate isn’t just about which model you choose for your next project. It’s about the structural shape of the internet going forward.

If closed AI wins decisively, the internet risks becoming even more centralized than it already is. A handful of companies — likely American and Chinese tech giants — would control the foundational intelligence layer of the web. Every app, every service, every piece of content would depend on accessing their APIs under their terms.

If open source AI wins, you get something closer to what happened with Linux: a messy, distributed, sometimes inconsistent ecosystem, but one where no single entity holds a chokehold. Small companies can build on the same foundation as trillion-dollar corporations. Governments can run their own sovereign AI. Researchers can audit what’s actually happening inside models.

Looking toward 2027, the dichotomy between open and closed models is blurring — closed providers are opening more components like training methodologies, while open-source projects are developing managed service offerings.

The most likely outcome isn’t a clean winner. It’s a hybrid world where:

- High-stakes reasoning tasks (complex legal analysis, advanced medical diagnosis, frontier math) run on closed frontier models

- High-volume, cost-sensitive workloads (summarization, classification, coding assistance) increasingly run on open-weight models through cheap managed endpoints

- Privacy-sensitive applications (healthcare records, financial data, government systems) self-host open models with custom fine-tuning

- Consumer products continue using closed APIs for their simplicity and polish

This routing pattern sends simple queries to a self-hosted 7–14B model and escalates complex queries to a frontier closed model. It typically handles 70–80% of requests on the cheap tier, which cuts total costs by a similar percentage as long as the cheap tier’s per-token cost is small relative to the frontier tier’s.
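A rough sketch of such a router follows. The complexity check (prompt length plus a few keywords) is a deliberately crude stand-in, not a recommended classifier, and the tier names and the $0.10 cheap-tier price are assumptions for illustration; the $5 figure echoes the proprietary price point mentioned earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    usd_per_million_tokens: float  # blended price, hypothetical


CHEAP = Tier("self-hosted-small", 0.10)   # assumed amortized cost
FRONTIER = Tier("closed-frontier", 5.00)  # price point mentioned earlier

# Illustrative hints that a prompt needs heavier reasoning.
ESCALATION_HINTS = ("prove", "legal", "diagnos", "multi-step")


def route(prompt: str, max_cheap_words: int = 200) -> Tier:
    """Send long or reasoning-heavy prompts to the frontier tier, the rest to the cheap tier."""
    text = prompt.lower()
    if len(text.split()) > max_cheap_words or any(hint in text for hint in ESCALATION_HINTS):
        return FRONTIER
    return CHEAP


def blended_savings(cheap_share: float, cheap: Tier = CHEAP, frontier: Tier = FRONTIER) -> float:
    """Fraction saved versus sending everything to the frontier tier.

    Assumes every request uses the same number of tokens.
    """
    blended = (cheap_share * cheap.usd_per_million_tokens
               + (1 - cheap_share) * frontier.usd_per_million_tokens)
    return 1 - blended / frontier.usd_per_million_tokens


print(route("Summarize this email").name)
print(route("Prove that the series converges").name)
print(f"{blended_savings(0.75):.1%} saved with 75% on the cheap tier")
```

Under these assumed prices, routing 75% of traffic to the cheap tier saves a little under 75%, because the cheap tier is not free. The closer its cost gets to the frontier price, the further the saving falls below the routed share.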

How to Choose the Right Approach for Your Use Case

If you’re building a product or deploying AI within your organization right now, here’s a practical framework:

Go with an open-weight model if:

- Your data is sensitive and can’t leave your infrastructure

- You need to fine-tune on proprietary or domain-specific data

- Cost at scale is a primary constraint

- You have the ML engineering capacity to manage deployment

- You need stable, versionable behavior over time

Go with a closed model if:

- You need plug-and-play speed and minimal engineering overhead

- Your tasks require frontier-level reasoning or multimodal understanding

- You’re building a prototype and want to move fast

- Your use case requires the very best coding or agentic performance

- Safety guarantees and support SLAs matter more than cost

Consider a hybrid strategy if:

- You have a mix of high-volume simple tasks and occasional complex ones

- You want cost optimization without sacrificing quality on important requests

- You’re building for scale and need to reduce API dependency over time
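The three checklists above can be compressed into a small decision function. This is one possible encoding, not a definitive rule set: the field names are hypothetical, and the ordering (hard privacy constraint first, then workload mix, then a simple count of signals) is an assumption about which criteria should dominate.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    data_must_stay_on_prem: bool = False
    needs_fine_tuning: bool = False
    cost_sensitive_at_scale: bool = False
    needs_frozen_versions: bool = False
    has_ml_engineers: bool = False
    needs_frontier_reasoning: bool = False
    prototyping: bool = False
    mixed_simple_and_complex_load: bool = False


def recommend(uc: UseCase) -> str:
    """Map the checklist answers to 'open-weight', 'closed', or 'hybrid'."""
    # Hard constraint: sensitive data cannot be sent to an external API.
    if uc.data_must_stay_on_prem:
        return "open-weight"
    # A mix of cheap bulk work and occasional hard requests suggests routing.
    if uc.mixed_simple_and_complex_load:
        return "hybrid"
    open_signals = sum([uc.needs_fine_tuning, uc.cost_sensitive_at_scale, uc.needs_frozen_versions])
    closed_signals = sum([uc.needs_frontier_reasoning, uc.prototyping, not uc.has_ml_engineers])
    if open_signals > closed_signals and uc.has_ml_engineers:
        return "open-weight"
    if closed_signals > open_signals:
        return "closed"
    return "hybrid"


print(recommend(UseCase(data_must_stay_on_prem=True)))
print(recommend(UseCase(prototyping=True)))
print(recommend(UseCase(has_ml_engineers=True, needs_fine_tuning=True, cost_sensitive_at_scale=True)))
```

Treating data residency as a veto rather than one signal among many reflects the point made in the privacy section: for regulated data, an external API may simply not be an option.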

The Bottom Line

The open source vs closed AI debate won’t be resolved cleanly — and that’s actually okay. The technologies are complementary rather than purely competitive, and the best organizations in 2026 are the ones learning to use both intelligently.

What’s clear is that closed AI no longer holds an overwhelming monopoly on capability. When a free, self-hostable model beats the best proprietary offerings from established Western labs on expert-level software engineering benchmarks, closed-model dominance is functionally over for anyone willing to run their own infrastructure.

But closed AI isn’t going away. The safety arguments are real, the reasoning performance gap still exists at the frontier, and the simplicity of a well-maintained API is genuinely valuable. The giants will keep pushing the frontier, and the open ecosystem will keep following close behind, narrowing the gap at every step.

The internet is watching. The debate continues. And the choices made by developers, enterprises, and regulators over the next few years will determine whether AI becomes the most democratizing technology in history — or the most concentrated one.

Disclaimer

The information provided in this article is for general informational purposes only. While every effort has been made to ensure accuracy, the AI landscape evolves rapidly and some details may change after publication. Benchmark figures, market share data, and model capabilities referenced are based on publicly available sources as of April 2026. This article does not constitute professional, legal, or financial advice. Readers are encouraged to conduct their own research before making technology or business decisions.