How AI Is Rewriting the Rules of Student Learning

There’s a quiet revolution happening inside classrooms, dorm rooms, and libraries around the world, and it doesn’t involve a chalkboard. The way AI is rewriting the rules of student learning is no longer a futuristic talking point for tech conferences. It’s happening right now, in ways that are reshaping how students study, how teachers teach, and how institutions think about education itself.

As of early 2026, the numbers tell a story that is hard to ignore. According to data from the Higher Education Policy Institute, 92% of students used AI in their studies in 2025, up from just 66% in 2024, a rise of 26 percentage points. That kind of year-over-year leap doesn’t happen with a passing trend. It signals a fundamental shift in how the next generation learns, thinks, and solves problems.

This post digs into what’s actually driving that shift, what the research says about outcomes, where things are still messy, and what students and educators should realistically expect going forward.

The Numbers Don’t Lie: AI Adoption Has Hit a Tipping Point

If there’s one thing that stands out in the 2025–2026 data, it’s the sheer speed of adoption. Consider a few highlights:

- 86% of students globally now use AI as part of their regular study routine (Digital Education Council, 2026)

- In the United States alone, student AI usage for school-related tasks jumped 26% in a single school year

- The Coursera AI in Higher Education Report, released in February 2026, found that four in five students say AI has improved their academic performance

- ChatGPT alone is used by 66% of students worldwide for educational purposes — making it by far the most popular AI tool in classrooms

These aren’t fringe behaviors. Students aren’t sneaking AI into their work despite school rules. Many are doing it openly, encouraged by teachers and administrators who increasingly see the tools as essential. According to the same Coursera report, 70% of students believe AI will improve exam performance and the quality of higher education overall.

And yet, 63% say they use AI for less than half of their academic tasks — which actually suggests something important: most students aren’t outsourcing their thinking. They’re using it as a supplement, not a substitute. At least, that’s the goal.

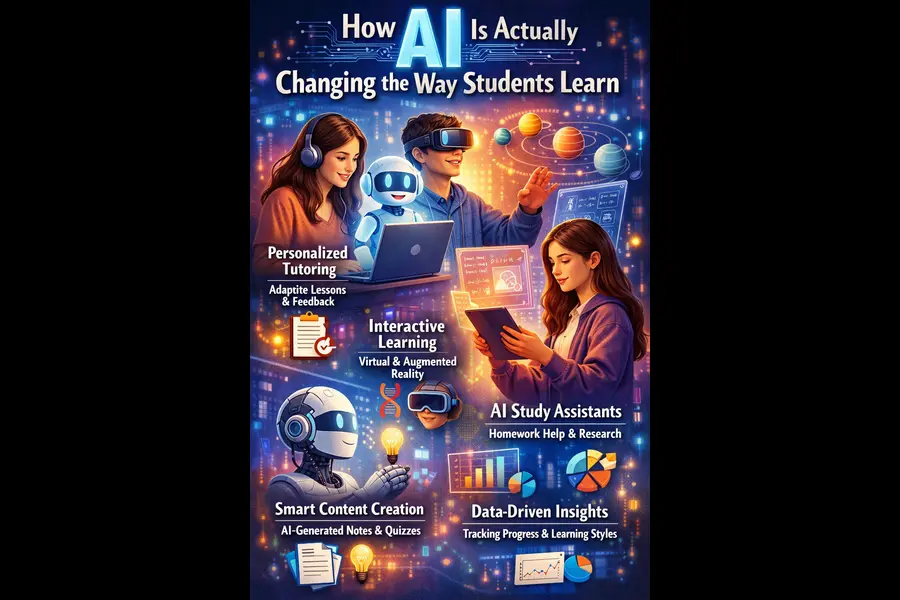

How AI Is Actually Changing the Way Students Learn

Beyond the headline stats, the more interesting question is how AI is changing things on a practical level. The answer is more nuanced than most headlines suggest.

Personalized Learning at Scale

One of education’s oldest challenges has been this: in a classroom of 30 students, every learner has a different pace, different strengths, and different gaps. A single teacher can’t realistically give each student individualized attention every single day. AI changes that equation.

Platforms built on adaptive learning algorithms can now adjust the difficulty of content in real time based on how a student is performing. If someone breezes through a math concept, the system moves them forward. If they’re struggling with a grammar rule, it loops back with different explanations until something clicks.
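To make the idea concrete, here is a minimal sketch of that kind of adjustment loop. It is not how any particular platform works: the class name `AdaptiveDifficulty`, the five difficulty levels, and the three-answer window are all assumptions chosen for illustration, and real systems typically use richer statistical models of what a student knows.

```python
from collections import deque


class AdaptiveDifficulty:
    """Toy difficulty controller (hypothetical, for illustration only).

    Steps up after a full window of correct answers and steps down
    after a full window of misses. Thresholds are assumptions.
    """

    def __init__(self, levels=5, window=3):
        self.levels = levels                 # difficulty runs from 1 to `levels`
        self.level = 1                       # every learner starts at the easiest level
        self.recent = deque(maxlen=window)   # sliding window of recent results

    def record(self, correct: bool) -> int:
        """Record one answer and return the level for the next question."""
        self.recent.append(correct)
        if len(self.recent) == self.recent.maxlen:
            if all(self.recent):
                # Breezing through: move forward and start a fresh window.
                self.level = min(self.level + 1, self.levels)
                self.recent.clear()
            elif not any(self.recent):
                # Struggling: loop back to easier material.
                self.level = max(self.level - 1, 1)
                self.recent.clear()
        return self.level


tutor = AdaptiveDifficulty()
answers = [True, True, True, True, True, True, False, False, False]
history = [tutor.record(a) for a in answers]
print(history)  # [1, 1, 2, 2, 2, 3, 3, 3, 2]
```

A mixed window (some right, some wrong) leaves the level unchanged, which is one simple way to keep the learner at a difficulty where they are neither bored nor overwhelmed.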

The outcomes research here is compelling. Students in AI-powered learning environments are achieving 54% higher test scores compared to those in traditional settings (Notie AI, 2026). A peer-reviewed randomized controlled trial published in Scientific Reports in June 2025 found that AI tutoring outperformed traditional in-class active learning with an effect size between 0.73 and 1.3 standard deviations — a result considered very strong in educational research. Notably, the AI group reached those results in a median of 49 minutes, compared to 60 minutes for students in a conventional classroom.
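For readers unfamiliar with the term, an effect size expressed in standard deviations is usually some form of standardized mean difference. The sketch below computes one common version, Cohen's d with a pooled standard deviation. This is an assumption about the general technique, not a reconstruction of the trial's analysis, and the score lists are invented purely for illustration.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(treatment, control):
    """Standardized mean difference using the pooled sample standard deviation."""
    n1, n2 = len(treatment), len(control)
    s1, s2 = stdev(treatment), stdev(control)
    pooled = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    return (mean(treatment) - mean(control)) / pooled


# Hypothetical post-test scores, NOT data from any study cited here.
ai_tutor = [78, 85, 90, 72, 88, 81]
classroom = [70, 76, 82, 65, 80, 74]

d = cohens_d(ai_tutor, classroom)
print(f"Cohen's d = {d:.2f}")
```

Read this way, an effect size of 1.0 means the average student in one group scored a full standard deviation above the average student in the other.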

That trial was run in a Harvard University physics course, where students using the AI tutor learned more than twice as much in less time than peers in active-learning classrooms.

The upshot? AI-driven personalization isn’t just making learning more convenient. It’s making it demonstrably more effective for many students.

Instant, On-Demand Feedback

Ask any educator what the biggest lever for improving student outcomes is, and many will say the same thing: timely feedback. The problem is that feedback in traditional education is almost always delayed — essays handed back a week later, test scores returned after the lesson has moved on.

AI removes that lag entirely. Students get feedback on drafts, problem sets, and even spoken responses the moment they finish. This keeps the material fresh and allows students to correct misconceptions before they solidify into habits.

According to the Center for Democracy and Technology’s October 2025 report, 69% of teachers said AI tools have improved their teaching methods, and 55% agreed that AI has given them more time to actually interact with students one-on-one. That’s significant. If AI handles the routine feedback loop, teachers can focus on the conversations that require empathy, critical thinking, and human connection.

AI as a Brainstorming Partner

The most common uses of AI among students, according to the 2026 data, paint a picture of a brainstorming and research assistant rather than a ghostwriter:

- 53% use AI to gather facts and learning materials quickly

- 51% use it for idea generation and planning before writing

- 37% use it specifically to get past writer’s block on assignments

- 33% use it to condense long readings into digestible summaries

- 32% use it to get early feedback on drafts

The framing matters here. Students at Fulton County Schools in Georgia, one of the early districts to integrate Microsoft’s Copilot Chat across its 87,000 students, described using it as a brainstorming partner — “to ideate, but not to actually do our work for us.” That balance, when it works, is where AI genuinely enhances the learning process rather than short-circuiting it.

The Concerns Are Real — and Worth Taking Seriously

None of this comes without legitimate concerns, and glossing over them would do a disservice to anyone trying to make smart decisions about AI in education.

Academic Integrity Is Under Pressure

A January 2026 national survey by the American Association of Colleges and Universities found that 73% of college faculty had personally handled an academic integrity issue involving AI. That’s not a small number. Nearly 1 in 5 students, according to separate data, admit to leaving unedited AI-generated text in submitted work.

The question institutions are grappling with isn’t just “is this cheating?” — it’s more fundamental than that. When a student uses AI to draft an essay they barely edit, what are they actually learning? The tool becomes a crutch, not a scaffold. And there’s neurological evidence to back up the concern: an MIT study found that students who relied on external AI or search support showed “significantly different neural connectivity patterns” compared to those who worked independently — with external help correlating with decreased brain connectivity.

Critical Thinking and Deep Learning Are at Risk

A whopping 95% of college faculty reported concerns about student overreliance on AI and diminished critical thinking, according to the American Association of Colleges and Universities (January 2026). And 70% of teachers in the CDT survey worry that AI is weakening students’ core research and analytical skills.

These fears are not unfounded. There’s a meaningful difference between a student who uses AI to explore an idea more deeply and one who uses it to avoid thinking altogether. The former is developing a genuine skill. The latter is deferring it.

The Training Gap Is Widening

Here’s an uncomfortable irony in all of this: while students are racing ahead with AI adoption, the institutions meant to guide them are lagging badly. As of 2026, under half of students and teachers have received any formal guidance or training from their institutions on how to use AI responsibly.

Only 19% of educational institutions incorporate any AI skills training into their curriculum. That means fewer than one in five institutions are actually preparing students to use these tools well. While 80% of high school educators report their students are receiving some AI literacy instruction, only 8% of Pre-K through 3rd grade students are getting the same, creating a developmental gap that could have long-term consequences.

The Teacher’s Role Isn’t Disappearing — It’s Evolving

One of the most persistent fears around AI in education is that it will replace teachers. The data, and the reality in classrooms, tell a different story.

What’s actually happening is a redistribution of effort. AI handles the repetitive, scalable parts of teaching: content delivery, routine feedback, quiz generation, summarization. Teachers, freed from some of that administrative weight, can redirect their energy toward what only humans can do well — building relationships, facilitating nuanced discussion, supporting students through confusion and failure, and connecting subject matter to real life.

Stanford Professor of Education Bryan Brown put it simply: “AI has the potential to support a single teacher who is trying to generate 35 unique conversations with each student.” That’s not replacement. That’s amplification.

As of 2026, 83% of K–12 teachers are using generative AI tools — primarily for lesson planning, feedback, and content creation. The teachers who are thriving in this environment aren’t the ones pretending AI doesn’t exist. They’re the ones who’ve figured out how to use it intentionally and teach their students to do the same.

What the AI Education Market Tells Us About the Direction We’re Heading

Follow the money, and the direction becomes clear. The global AI education market reached $7.57 billion in 2025 and is projected to hit $30.28 billion by 2029. That’s not venture capital speculation — it reflects genuine institutional investment from school districts, universities, and governments around the world.
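The article's sources give only the two endpoints, not a growth rate. As a rough back-of-the-envelope check, the sketch below derives the implied compound annual growth rate, assuming steady compounding over the four years from 2025 to 2029. The helper name `cagr` is ours, not something from the cited market data.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of US dollars, as quoted above.
rate = cagr(7.57, 30.28, 2029 - 2025)
print(f"Implied annual growth: {rate:.1%}")  # Implied annual growth: 41.4%
```

In other words, the projection amounts to the market quadrupling in four years, or growing by roughly 41% each year under that assumption.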

Singapore’s “Smart Nation” initiative is training every teacher in the country on AI by 2026. The UAE’s Ministry of Education is rolling out a nationally aligned AI tutoring platform. Across the US, the College Board launched its GenAI Studio specifically to study responsible AI use in K–12 settings and develop evidence-based approaches that protect genuine learning.

The OECD’s 2026 Digital Education Outlook offered a pointed recommendation: move beyond general-purpose AI tools toward purpose-built educational AI — designed to produce durable learning gains, not just better task outputs. That distinction matters enormously.

What This Means for Students Right Now

If you’re a student reading this, here’s what the research and the reality actually suggest:

Use AI as a thinking partner, not a replacement for thinking. The students getting the most out of AI are the ones who bring their own ideas to the table first, then use AI to pressure-test, expand, or refine them. The ones falling behind are those treating it like an answer machine.

Build AI literacy deliberately. Knowing how to use ChatGPT is not the same as understanding how to evaluate its outputs, recognize its biases, or know when it’s confidently wrong. That critical layer of skill is what will differentiate students in the workforce of 2030.

Don’t outsource the struggle. Some of the most valuable learning happens in the friction — in the moments when you don’t immediately know the answer and have to work through it. Removing that struggle entirely may produce a better essay in the short term, but it hollows out the deeper competencies you’ll need later.

The Bigger Picture: Rules Are Being Rewritten, Not Erased

There’s a tendency in conversations about AI and education to frame everything as either utopian or apocalyptic — AI will either save education or destroy it. Neither extreme is particularly useful.

What’s actually happening is more interesting and more complicated. AI is forcing education to do something it has resisted for decades: ask hard questions about what learning is actually for. If AI can pass bar exams, write essays, and solve calculus problems, what does it mean to educate a human being? What skills, habits of mind, and capacities should schooling develop that AI genuinely can’t replicate?

The answers to those questions — curiosity, judgment, empathy, ethical reasoning, the ability to sit with ambiguity — aren’t going anywhere. But the path to developing them may look very different in 2030 than it did in 2015. And that’s a conversation worth having seriously, rather than reactively.

AI isn’t the end of education. It may, if we’re thoughtful about it, be the beginning of a more honest version of it.

Disclaimer

The information provided in this article is for educational and informational purposes only. While every effort has been made to ensure accuracy, some statistics and data points may evolve as AI adoption in education continues to change rapidly. The sources cited are third-party organizations and publications; we do not claim ownership of their data or findings. This article does not constitute academic, legal, or professional advice. Readers are encouraged to verify information directly with the original sources before making any institutional or policy decisions.

Frequently Asked Questions

Is AI really changing how students learn?

Yes. According to the Digital Education Council, 86% of students globally use AI as a regular part of their study routine as of 2026.

Does AI help students perform better academically?

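One 2026 figure from Notie AI puts test scores in AI-powered learning environments 54% higher than in traditional settings, and a randomized controlled trial published in Scientific Reports found a strong effect for AI tutoring.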

Are teachers being replaced by AI in education?

No — AI is amplifying what teachers do, handling routine tasks so educators can focus more on meaningful student interaction.

Is using AI for studying considered cheating?

It depends on how it’s used — using AI as a thinking partner is encouraged, but submitting unedited AI-generated work violates most academic integrity policies.

What is the biggest risk of AI in student learning?

Overreliance — when students use AI to avoid thinking altogether rather than using it to deepen their understanding.
